- Catherine

John Doe

Answered on 7:51 am

The difference between 400G-BIDI, 400G-SRBD and 400G-SR4.2 is mainly in naming and in the form factor of the modules. All three rely on the same principle: four pairs of multimode fibers (eight fibers in total), with each fiber carrying two wavelengths, one in each direction, at 50G PAM4 per wavelength, for 400G of bandwidth in each direction. 400G-BIDI is a generic name for this technology, while 400G-SRBD and 400G-SR4.2 are specific names for modules that implement it.
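The lane arithmetic can be checked with a short sketch. The class and field names here are made up for illustration, and the 50 Gb/s per wavelength figure is taken from the IEEE 400GBASE-SR4.2 definition rather than from any particular vendor datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BidiLink:
    """Hypothetical model of a BiDi multimode link (illustrative only)."""
    fiber_pairs: int          # 4 for a 400G BiDi module
    gbps_per_wavelength: int  # assumed 50 (PAM4) per wavelength

    @property
    def fibers(self) -> int:
        # Each pair consists of two fibers.
        return self.fiber_pairs * 2

    @property
    def gbps_per_direction(self) -> int:
        # Every fiber carries one wavelength in each direction,
        # so each fiber contributes one lane per direction.
        return self.fibers * self.gbps_per_wavelength

    def breakout_gbps(self) -> list:
        # In a breakout, each fiber pair serves one lower-speed port.
        return [2 * self.gbps_per_wavelength] * self.fiber_pairs


link = BidiLink(fiber_pairs=4, gbps_per_wavelength=50)
print(link.fibers, link.gbps_per_direction, link.breakout_gbps())
```

With four pairs this gives 8 fibers, 400 Gb/s per direction, and four 100 Gb/s breakout ports, which matches the 4x100G breakout case.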

The 400G-SRBD module is based on the QSFP-DD form factor, a double-density version of QSFP. It has an MPO-12 connector, and the QSFP-DD port it plugs into remains backward compatible with existing QSFP modules. The 400G-SRBD module can also be used for breakout applications, where it connects to four 100G-BIDI modules in the QSFP28 form factor.

The 400G-SR4.2 name follows the IEEE 400GBASE-SR4.2 designation. Modules sold under this name are commonly offered in the OSFP form factor, which is designed for higher power and better thermal performance. Like the 400G-SRBD, the 400G-SR4.2 module uses an MPO-12 connector with eight active fibers. It can also be used for breakout applications, where it connects to four 100G-SR1.2 modules, which typically use the QSFP28 form factor.

Both the 400G-SRBD and the 400G-SR4.2 modules are compliant with the IEEE 802.3cm standard and the 400G BiDi MSA specification. They support link lengths of up to 100m over OM4 multimode fiber.
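As a rough planning aid, a reach check might look like the sketch below. The 100m OM4 limit comes from the answer above. The OM3 (70m) and OM5 (150m) figures are the values IEEE 802.3cm gives for 400GBASE-SR4.2 and are added here as an assumption, so confirm them against the datasheet of the specific module. The function and table names are illustrative.

```python
# Maximum reach in meters per multimode fiber grade.
# OM4 is from the answer above; OM3 and OM5 are assumed from IEEE 802.3cm.
MAX_REACH_M = {"OM3": 70, "OM4": 100, "OM5": 150}


def link_within_reach(fiber_grade: str, length_m: float) -> bool:
    """Return True if a link of length_m fits the reach for fiber_grade."""
    grade = fiber_grade.upper()
    if grade not in MAX_REACH_M:
        raise ValueError(f"unknown fiber grade: {fiber_grade!r}")
    return length_m <= MAX_REACH_M[grade]


print(link_within_reach("OM4", 85))   # an 85m OM4 run is within the limit
print(link_within_reach("OM4", 120))  # a 120m OM4 run exceeds it
```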

People Also Ask

Global 400G Ethernet Switch Market and Technical Architecture In-depth Research Report: AI-Driven Network Restructuring and Ecosystem Evolution

Executive Summary Driven by the explosive growth of the digital economy and Artificial Intelligence (AI) technologies, global data center network infrastructure is at a critical historical node of migration from 100G to 400G/800G. As Large Language Model (LLM) parameters break through the trillion level and demands for High-Performance Computing (HPC)

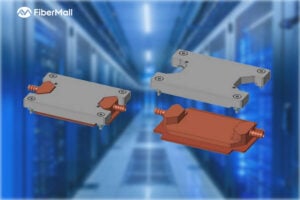

Key Design Constraints for Stack-OSFP Optical Transceiver Cold Plate Liquid Cooling

Foreword The data center industry has already adopted 800G/1.6T optical modules on a large scale, and the demand for cold plate liquid cooling of optical modules has increased significantly. To meet this industry demand, OSFP-MSA V5.22 version has added solutions applicable to cold plate liquid cooling. At present, there are

NVIDIA DGX Spark Quick Start Guide: Your Personal AI Supercomputer on the Desk

NVIDIA DGX Spark — the world’s smallest AI supercomputer powered by the NVIDIA GB10 Grace Blackwell Superchip — brings data-center-class AI performance to your desktop. With up to 1 PFLOP of FP4 AI compute and 128 GB of unified memory, it enables local inference on models up to 200 billion parameters and fine-tuning of models

RoCEv2 Explained: The Ultimate Guide to Low-Latency, High-Throughput Networking in AI Data Centers

In the fast-evolving world of AI training, high-performance computing (HPC), and cloud infrastructure, network performance is no longer just a supporting role—it’s the bottleneck breaker. RoCEv2 (RDMA over Converged Ethernet version 2) has emerged as the go-to protocol for building lossless Ethernet networks that deliver ultra-low latency, massive throughput, and minimal CPU

Comprehensive Guide to AI Server Liquid Cooling Cold Plate Development, Manufacturing, Assembly, and Testing

In the rapidly evolving world of AI servers and high-performance computing, effective thermal management is critical. Liquid cooling cold plates have emerged as a superior solution for dissipating heat from high-power processors in data centers and cloud environments. This in-depth guide covers everything from cold plate manufacturing and assembly to development requirements

Related Articles

800G SR8 and 400G SR4 Optical Transceiver Modules Compatibility and Interconnection Test Report

Version Change Log Writer V0 Sample Test Cassie Test Purpose Test Objects:800G OSFP SR8/400G OSFP SR4/400G Q112 SR4. By conducting corresponding tests, the test parameters meet the relevant industry standards, and the test modules can be normally used for Nvidia (Mellanox) MQM9790 switch, Nvidia (Mellanox) ConnectX-7 network card and Nvidia (Mellanox) BlueField-3, laying a foundation for

Why Is It Necessary to Remove the DSP Chip in LPO Optical Module Links?

If you follow the optical module industry, you will often hear the phrase “LPO needs to remove the DSP chip.” Why is this? To answer this question, we first need to clarify two core concepts: what LPO is and the role of DSP in optical modules. This will explain why

Global 400G Ethernet Switch Market and Technical Architecture In-depth Research Report: AI-Driven Network Restructuring and Ecosystem Evolution

Executive Summary Driven by the explosive growth of the digital economy and Artificial Intelligence (AI) technologies, global data center network infrastructure is at a critical historical node of migration from 100G to 400G/800G. As Large Language Model (LLM) parameters break through the trillion level and demands for High-Performance Computing (HPC)

Key Design Constraints for Stack-OSFP Optical Transceiver Cold Plate Liquid Cooling

Foreword The data center industry has already adopted 800G/1.6T optical modules on a large scale, and the demand for cold plate liquid cooling of optical modules has increased significantly. To meet this industry demand, OSFP-MSA V5.22 version has added solutions applicable to cold plate liquid cooling. At present, there are

NVIDIA DGX Spark Quick Start Guide: Your Personal AI Supercomputer on the Desk

NVIDIA DGX Spark — the world’s smallest AI supercomputer powered by the NVIDIA GB10 Grace Blackwell Superchip — brings data-center-class AI performance to your desktop. With up to 1 PFLOP of FP4 AI compute and 128 GB of unified memory, it enables local inference on models up to 200 billion parameters and fine-tuning of models

RoCEv2 Explained: The Ultimate Guide to Low-Latency, High-Throughput Networking in AI Data Centers

In the fast-evolving world of AI training, high-performance computing (HPC), and cloud infrastructure, network performance is no longer just a supporting role—it’s the bottleneck breaker. RoCEv2 (RDMA over Converged Ethernet version 2) has emerged as the go-to protocol for building lossless Ethernet networks that deliver ultra-low latency, massive throughput, and minimal CPU

Comprehensive Guide to AI Server Liquid Cooling Cold Plate Development, Manufacturing, Assembly, and Testing

In the rapidly evolving world of AI servers and high-performance computing, effective thermal management is critical. Liquid cooling cold plates have emerged as a superior solution for dissipating heat from high-power processors in data centers and cloud environments. This in-depth guide covers everything from cold plate manufacturing and assembly to development requirements