- Catherine

- September 7, 2023

- 8:10 am

John Doe

Answered at 8:10 am

PAM-4 and NRZ are two modulation techniques used to transmit data over an electrical or optical channel. Modulation is the process of varying a property of a signal (such as its amplitude, frequency, or phase) to encode information. Each technique has advantages and disadvantages that depend on the channel characteristics and the data rate.

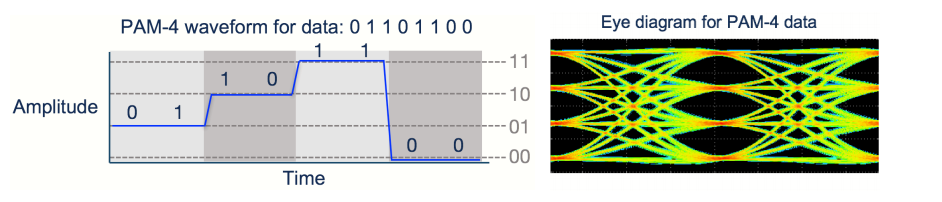

PAM-4 stands for 4-level Pulse Amplitude Modulation. The signal can take four different amplitude (or voltage) levels, each representing two bits of information. For example, a PAM-4 signal can use 0V, 1V, 2V, and 3V to encode 00, 01, 11, and 10 respectively. This ordering is a Gray code: adjacent levels differ by only one bit, so mistaking a level for its neighbor corrupts one bit rather than two. PAM-4 can transmit twice as much data as NRZ at the same symbol rate (or baud rate), which is the number of symbols transmitted per second. However, PAM-4 also has drawbacks. Because four levels share the same voltage swing, the spacing between adjacent levels is one third of the NRZ spacing, which lowers the signal-to-noise ratio (SNR) and raises the bit error rate (BER). PAM-4 also tends to consume more power, because it requires more sophisticated signal processing and error correction to overcome these challenges. PAM-4 is used for high-speed data transmission such as 400G Ethernet.
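The bit-to-level mapping in the example above can be sketched in a few lines of Python. This is only an illustration of the idea, not how a real transceiver is built: the function names `pam4_encode` and `pam4_decode` are made up for this sketch, and the decoder simply picks the nearest ideal level, which ignores the equalization and error correction a real receiver would apply.

```python
# Gray-coded PAM-4 mapping taken from the example in the answer:
# 00 -> 0 V, 01 -> 1 V, 11 -> 2 V, 10 -> 3 V
PAM4_LEVELS = {(0, 0): 0.0, (0, 1): 1.0, (1, 1): 2.0, (1, 0): 3.0}
PAM4_BITS = {volts: bits for bits, volts in PAM4_LEVELS.items()}


def pam4_encode(bits):
    """Turn a list of 0/1 bits into PAM-4 voltages, two bits per symbol."""
    if len(bits) % 2 != 0:
        raise ValueError("PAM-4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def pam4_decode(voltages):
    """Map each received voltage to the nearest ideal level, then to its bits."""
    bits = []
    for v in voltages:
        nearest = min(PAM4_BITS, key=lambda level: abs(level - v))
        bits.extend(PAM4_BITS[nearest])
    return bits


if __name__ == "__main__":
    data = [0, 0, 0, 1, 1, 1, 1, 0]
    symbols = pam4_encode(data)
    print(symbols)               # 8 bits become 4 symbols
    print(pam4_decode(symbols))  # recovers the original bits
```

Eight bits become four symbols, which is where the "twice the data at the same symbol rate" comes from.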

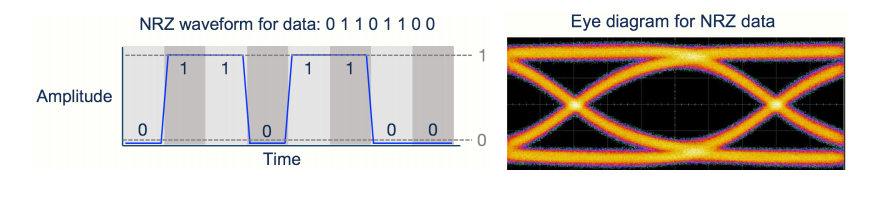

NRZ stands for Non-Return-to-Zero. The signal can take two different amplitude (or voltage) levels, each representing one bit of information. For example, an NRZ signal can use -1V and +1V to encode 0 and 1 respectively. NRZ does not return to zero voltage between symbols, hence the name. NRZ has some advantages over PAM-4, such as lower power consumption, higher SNR, and lower BER. NRZ is simpler and more robust than PAM-4, but it carries half the data at the same symbol rate. NRZ is typically used at lower lane rates, such as the four 25G lanes that make up many 100G Ethernet links.
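For comparison, here is a minimal NRZ sketch using the -1V / +1V example above, together with a rough calculation of why PAM-4 has the lower SNR. The function names are hypothetical, and the penalty figure assumes both signals use the same peak-to-peak swing with evenly spaced levels, so it is an idealized estimate rather than a measured value.

```python
import math


def nrz_encode(bits, low=-1.0, high=1.0):
    """One bit per symbol: 0 -> low voltage, 1 -> high voltage."""
    return [high if b else low for b in bits]


def nrz_decode(voltages, threshold=0.0):
    """Decide each bit by comparing the voltage against a single threshold."""
    return [1 if v > threshold else 0 for v in voltages]


def eye_penalty_db(levels):
    """Level-spacing penalty relative to a 2-level signal with the same swing.

    Assumes evenly spaced levels: the spacing shrinks by a factor of
    (levels - 1), and the penalty is expressed as a voltage ratio in dB.
    """
    return 20 * math.log10(levels - 1)


if __name__ == "__main__":
    data = [0, 1, 1, 0]
    print(nrz_encode(data))                  # 4 bits become 4 symbols
    print(nrz_decode([-0.8, 0.7, 1.1, -0.2]))
    print(round(eye_penalty_db(4), 2))       # PAM-4 vs. NRZ, about 9.5 dB
```

With only one decision threshold, NRZ tolerates much more noise before a symbol is misread, which is the robustness the answer refers to.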

When a signal is referred to as “25Gb/s NRZ” or “25G NRZ”, it means the signal carries data at 25 Gbit/s using NRZ modulation. When a signal is referred to as “50G PAM-4” or “100G PAM-4”, it means the signal carries data at 50 Gbit/s or 100 Gbit/s, respectively, using PAM-4 modulation. In each case the number is the bit rate, not the symbol rate.
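The relationship between these labels and the symbol rate is simple arithmetic: bit rate = symbol rate × bits per symbol, where bits per symbol is log2 of the number of levels. The sketch below uses nominal round numbers; real Ethernet lanes run slightly faster than the nominal figure to carry encoding and error-correction overhead, which is ignored here. The function name `bit_rate` is just for illustration.

```python
import math


def bit_rate(symbol_rate_baud, levels):
    """Nominal bit rate in bit/s for a given symbol rate and number of levels."""
    return symbol_rate_baud * math.log2(levels)


if __name__ == "__main__":
    GBD = 1e9  # one gigabaud, i.e. 1e9 symbols per second

    for label, baud, levels in [
        ("25G NRZ", 25 * GBD, 2),
        ("50G PAM-4", 25 * GBD, 4),
        ("100G PAM-4", 50 * GBD, 4),
    ]:
        gbit = bit_rate(baud, levels) / 1e9
        print(f"{label}: {baud / GBD:.0f} GBd x {math.log2(levels):.0f} bit/symbol = {gbit:.0f} Gbit/s")
```

Under these nominal assumptions, a 50G PAM-4 lane has the same symbol rate as a 25G NRZ lane, and a 100G PAM-4 lane doubles it.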

People Also Ask

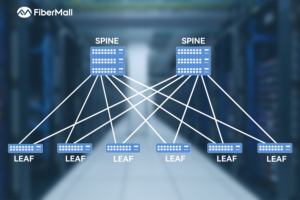

Spine-Leaf vs. Traditional Three-Tier Architecture: Comprehensive Comparison and Analysis

Introduction Evolution of Data Center Networking Over the past few decades, data center networking has undergone a massive transformation from simple local area networks to complex distributed systems. In the 1990s, data centers primarily relied on basic Layer 2 switching networks, where servers were interconnected via hubs or low-end switches.

AMD: Pioneering the Future of AI Liquid Cooling Markets

In the rapidly evolving landscape of AI infrastructure, AMD is emerging as a game-changer, particularly in liquid cooling technologies. As data centers push the boundaries of performance and efficiency, AMD’s latest advancements are setting new benchmarks. FiberMall, a specialist provider of optical-communication products and solutions, is committed to delivering cost-effective

The Evolution of Optical Modules: Powering the Future of Data Centers and Beyond

In an era dominated by artificial intelligence (AI), cloud computing, and big data, the demand for high-performance data transmission has never been greater. Data centers, the beating hearts of this digital revolution, are tasked with processing and moving massive volumes of data at unprecedented speeds. At the core of this

How is the Thermal Structure of OSFP Optical Modules Designed?

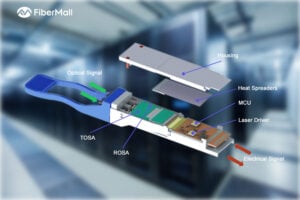

The power consumption of ultra-high-speed optical modules with 400G OSFP and higher rates has significantly increased, making thermal management a critical challenge. For OSFP package type optical modules, the protocol explicitly specifies the impedance range of the heat sink fins. Specifically, when the cooling gas wind pressure does not exceed

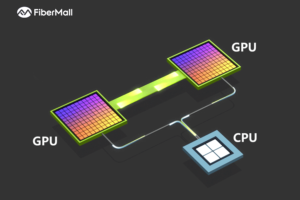

AI Compute Clusters: Powering the Future

In recent years, the global rise of artificial intelligence (AI) has captured widespread attention across society. A common point of discussion surrounding AI is the concept of compute clusters—one of the three foundational pillars of AI, alongside algorithms and data. These compute clusters serve as the primary source of computational

Data Center Switches: Current Landscape and Future Trends

As artificial intelligence (AI) drives exponential growth in data volumes and model complexity, distributed computing leverages interconnected nodes to accelerate training processes. Data center switches play a pivotal role in ensuring timely message delivery across nodes, particularly in large-scale data centers where tail latency is critical for handling competitive workloads.
